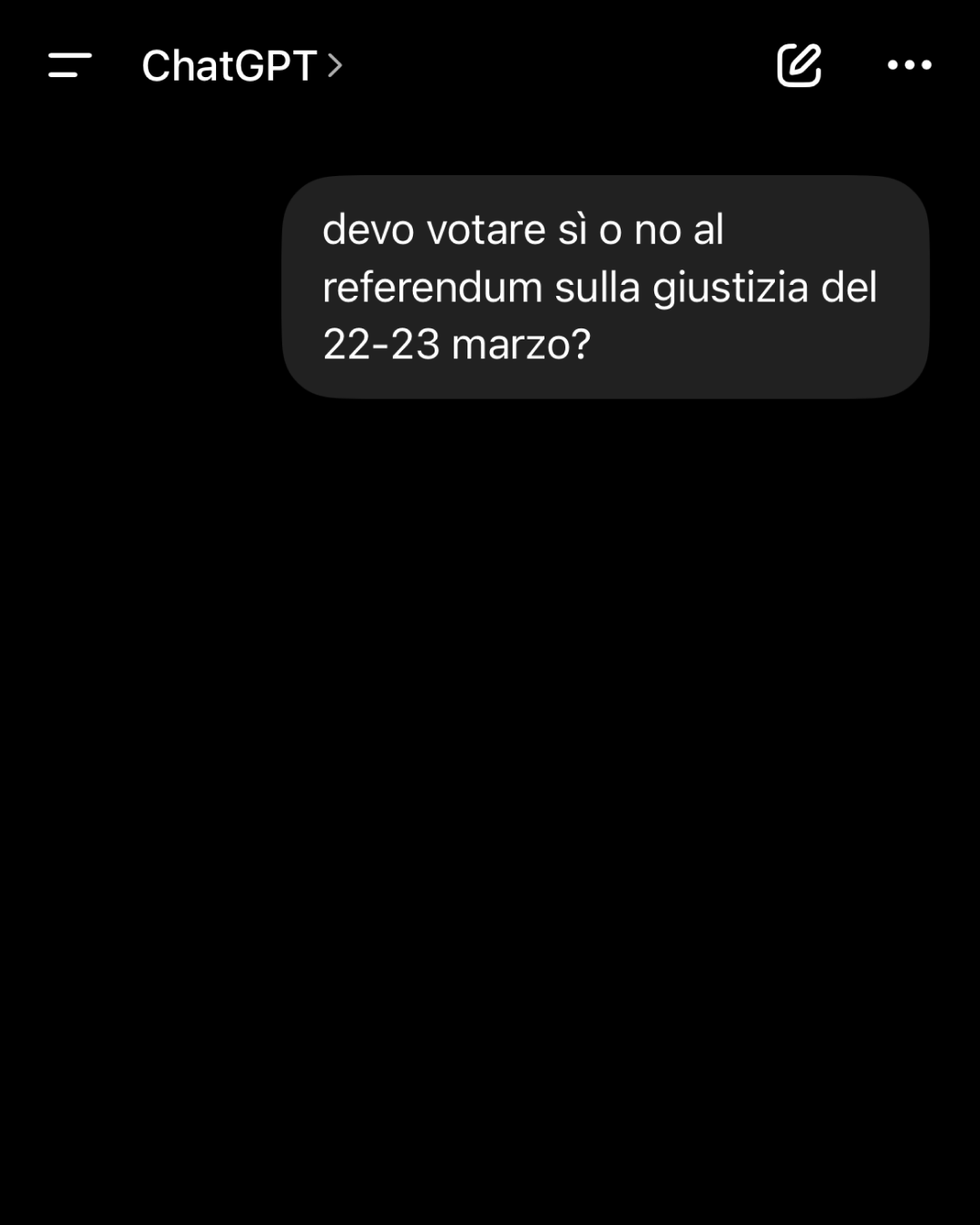

Please don't ask chatbots who to vote for

The issue has resurfaced in connection with Italy's referendum on justice reform

For some time there has been debate about the risk that artificial intelligence tools, including ChatGPT, the most widely used of them, could be used to influence citizens' voting preferences, more or less indirectly, as they become increasingly relevant. The topic has resurfaced in Italy in connection with the important and contested constitutional referendum on judicial reform, generically called the “justice reform”, scheduled for March 22 and 23.

If “Yes” wins the upcoming referendum, as the center-right hopes, the Meloni government will emerge stronger and more united; if “No” prevails, that advantage will pass to the opposition parties. Moreover, the referendum does not require a quorum: the result will be valid regardless of how many citizens vote, which makes the event even more significant.

In this context, chatbots may seem like relatively minor tools of persuasion, yet several studies of election campaigns indicate that their impact can be significant, especially at scale and in closely balanced contests between political factions. Two studies published in Nature and Science, among the most authoritative international academic journals, show that the influence attributed to chatbots can be about four times greater than that of electoral ads or advertisements on social networks.

Are voters really influenced by chatbots?

my cousin asking chatgpt for info on the referendum we are so fucking cooked

— federica (@bluelndigo) June 8, 2025

During the 2024 U.S. presidential election, a group of researchers ran an experiment involving over two thousand voters, roughly evenly split between supporters of Donald Trump and Kamala Harris. Each participant was asked to discuss politics with a chatbot specifically trained to support either the Republican or the Democratic candidate. At the end of the conversation, voters were given the opportunity to state their preference. The results showed that when the chatbot aligned with the participant’s political beliefs, the interaction tended to reinforce those beliefs, and vice versa.

To verify the study’s conclusions, the same research group repeated the experiment the following year in Canada and Poland, in both cases shortly before an election. The persuasion effect observed in the two countries was even more pronounced than the one recorded twelve months earlier in the United States: about one in ten participants reported having changed their voting intention after interacting with the chatbot. It is not certain that similar effects would occur in a real election, where voter preferences are shaped by multiple, often uncontrollable, variables. Even so, many experts view the potential relationship between AI and elections with growing concern: because artificial intelligence systems are in part still under development, there are no adequate regulations to govern their use in politics and ensure full neutrality.

Why it is wrong to consider AI neutral

@onlyjayus: Asking ChatGPT if they would vote for Kamala Harris or Donald Trump

One of the most frequently raised concerns about chatbots shaping public opinion during elections and referendums is that many people tend to consider AI a neutral entity. Many users are not fully aware that chatbots can incorporate the biases, prejudices, and interpretative choices of their designers and developers. Furthermore, artificial intelligence tools do not always convey objective truths: the information they generate comes from models trained on enormous datasets, which inevitably incorporate pre-existing interpretations and beliefs and are therefore, by their nature, “partial”.

Experts often describe AI software as a tool that does not truly “understand” the content it generates, since it is based on language models that combine words according to statistical patterns. Moreover, a chatbot rarely admits to not knowing something: in most cases, when it lacks information, it tends to make something up. Yet as more and more people put questions every day to tools like ChatGPT or Gemini, among others, the responses of these systems incorporate an ever larger share of the information circulating online and offline, and for many users they take on the value of absolute “truth”.

Added to this is a factor not to be overlooked: the extreme ease of use of chatbots increases their influence on people. Reflections on the impact of such systems on the organization of knowledge and access to information are not new: years ago, with the rise of search engines, there were discussions about the risks of excessive upstream selection of information. In many ways, however, chatbots amplify these same risks, effectively acting as an additional filter, to the point that consulting multiple sources comes to seem unnecessary. However convenient, this can have significant consequences, especially in electoral contexts, where a thorough and critical understanding of the positions of the different factions is essential.