What is “AI nationalism”? Every day, we are moving ever closer to a dystopia

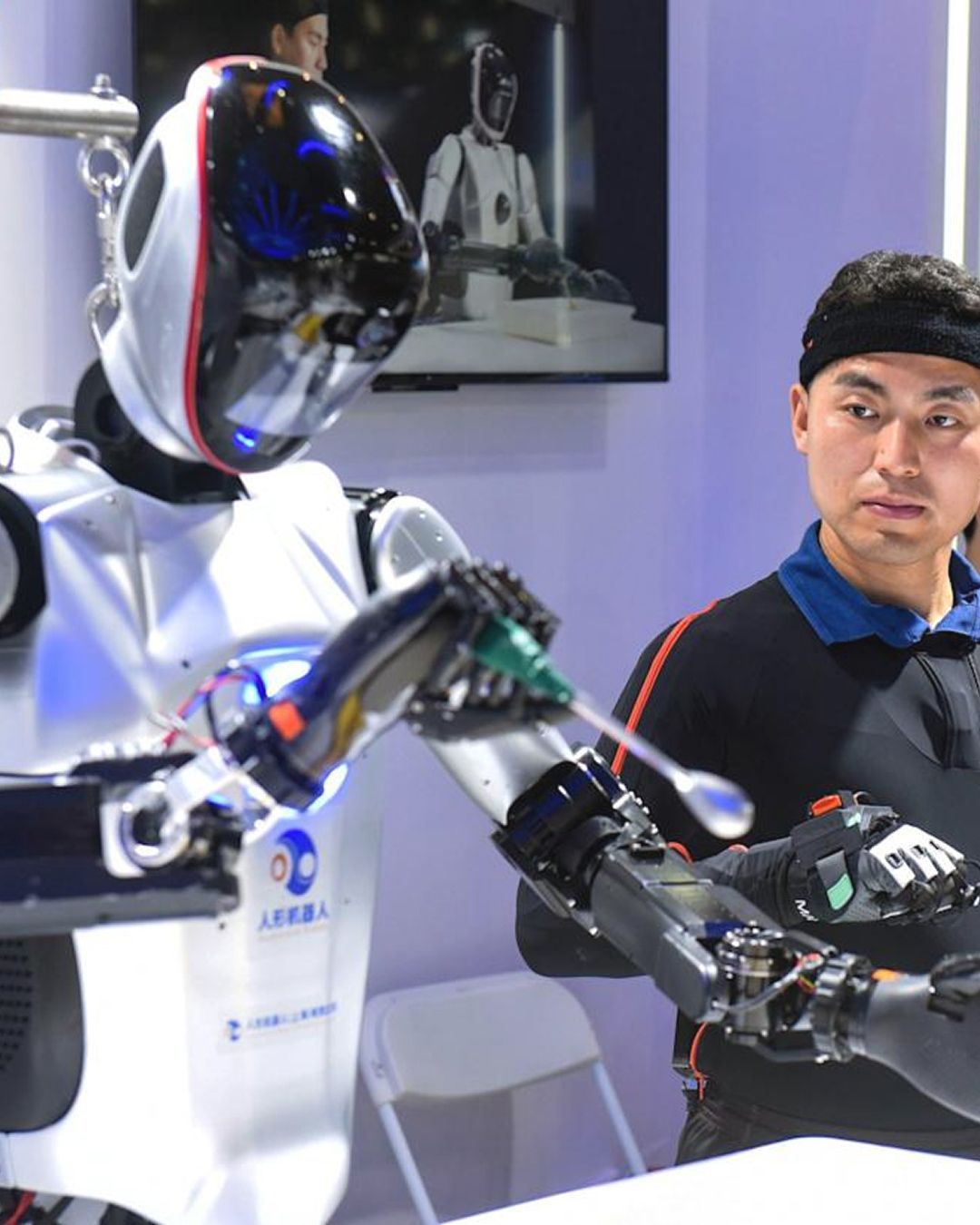

While the general public uses AI to create surreal videos of cats, psychoanalyze themselves, or even shop, the world's major powers are discovering that this technology has far broader and, to say the least, more sinister applications in both the administrative and military fields. There is only one problem: all the main AI models developed today are American or Chinese, so using them for governmental purposes raises disturbing doubts about national security and about how the data they handle is really used.

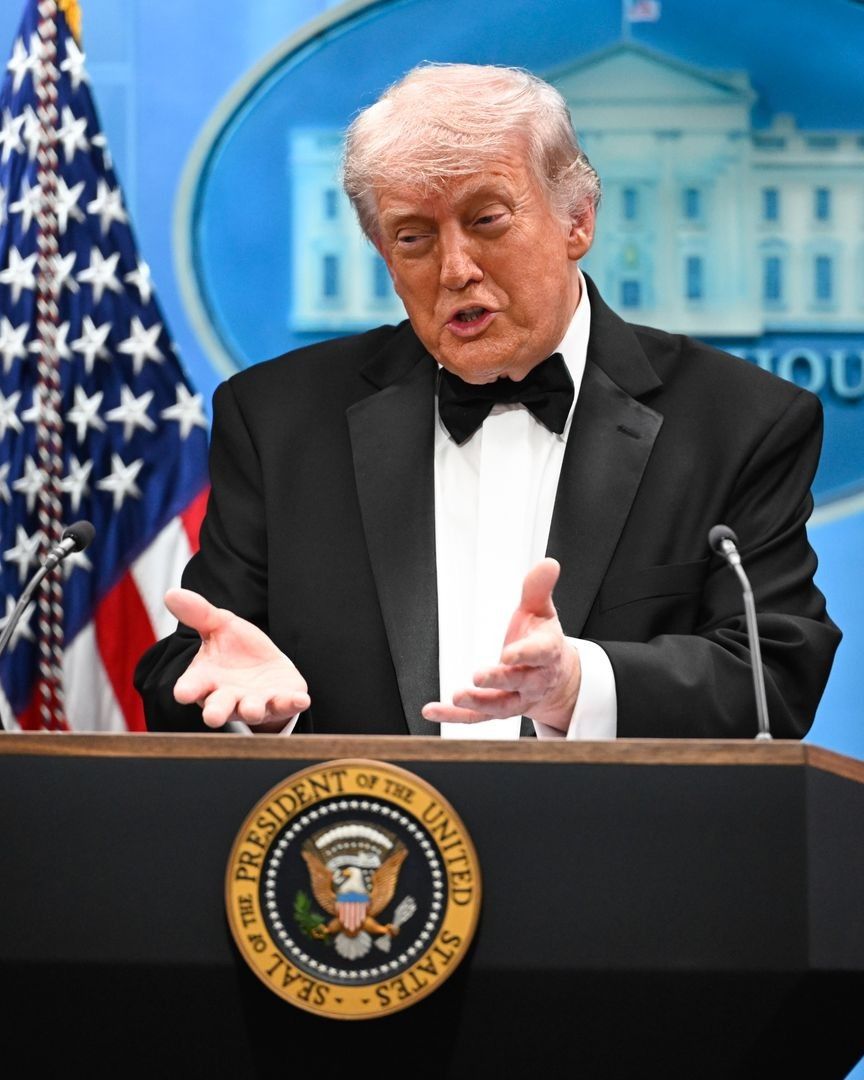

This month, the debate on AI nationalism has erupted in the United States, where the Pentagon has designated Anthropic as a national security risk after the company refused to remove restrictions on the use of its Claude models for mass surveillance of citizens and autonomous weapon systems. It is perhaps the first time the U.S. government has discredited a company for these reasons.

After the blacklisting of Anthropic, OpenAI signed a $200 million contract with the Department of Defense, declaring that operational decisions on these systems belong to the government and not to private companies. In effect, it took Anthropic's place because it does not impose the same ethical limits. Meanwhile, Trump had already signed an executive order in December to eliminate state-level regulations on AI, a sector whose investments are becoming an economic engine that the administration does not intend to leave in private hands. But what happens when the power of AI becomes a tool of governmental control?

What is AI nationalism?


AI nationalism is the doctrine that every nation should develop and exclusively control its own artificial intelligence technologies in order to ensure technological sovereignty and protect its economic, military, and geopolitical interests. The idea gained international visibility thanks to the essay “AI Nationalism,” published in 2018 by British investor Ian Hogarth, who defined this technology as a strategic resource comparable to nuclear power or oil. At stake is the global influence of individual states.

An example cited by Hogarth is the 2014 sale of DeepMind, a leading British AI research lab, to Google for about 400 million pounds. According to the author, it would have been better if the British government had blocked the acquisition or helped keep the lab independent, to avoid ceding this important asset to an American giant. Had DeepMind stayed in British hands, the argument goes, the United Kingdom would today have significant political leverage on the global geopolitical chessboard.

What are recent examples of AI nationalism?

Should we nationalize AI? That is, should we replace xAI, Anthropic, OpenAI, and others with a government organization?

— Daniel Lemire (@lemire) March 15, 2026

Hell no! And let me tell you why it’s a terrible idea.

One of Canada’s leading newspapers is publishing a piece by Bruce Schneier where he calls for the…

In the USA, AI nationalism has been widely embraced. For example, the government has blocked exports of advanced chips to China to slow down the Chinese government, which has announced plans to achieve AI leadership by 2030. Meanwhile, countries like France, India, and the United Arab Emirates are investing billions in creating national AI systems and their own technological infrastructures.

After the December 2025 executive order, which aims to replace the individual U.S. states' AI rules with a single national framework, an “AI Litigation Task Force” was created to handle the inevitable court cases that will follow. In addition, a law giving the government the power to control the production and investments of key companies in cases of national emergency has been invoked as a possible tool to declare AI a priority strategic resource. The debate also includes concerns about data centers, massive facilities that consume even more massive amounts of water and are appearing all over the United States, alarming various communities.

The pros and cons of AI nationalization

Aside from concerns about citizens' privacy, the nationalization of AI has evident advantages in the fields of national security and strategic control. In a perfect world (and thus not ours), the state could take over the development of these technologies, eliminating the risk that a handful of mega-companies turn them into a global oligopoly with even worse implications for global stability and collective security.

Again in an ideal world, public control would guarantee greater transparency toward citizens about how data is used and what the actual applications of the technology are, reducing the danger of abuses hidden behind confidential commercial agreements. Moreover, nationalization would allow national resources such as funding, talent, and infrastructure to be concentrated on a single goal, accelerating technological advancement. In practice, this would be a policy similar to the one that exists for uranium enrichment and the creation of nuclear weapons.

Yet the disadvantages are even more obvious. Concentrating so much power, especially surveillance power, in the hands of a single governmental entity makes the risks of political abuse, censorship, or use for authoritarian purposes simply too evident. Moreover, AI technology develops too quickly, and often too collaboratively, for extremely slow administrative bureaucracies to keep up with it, so, depending on the state, innovation could proceed quite slowly. Furthermore, acquiring or nationalizing companies valued at hundreds of billions of dollars would require colossal public resources, with the risk of financial shocks, increased debt, or distortions in the labor and investment markets.

The issue, however, is that AI is a technology built on global diffusion, one that learns from and regulates itself through real-time feedback from millions of users. For such a technology, excessive regulation could do two kinds of damage. It could block critical investments, because the legislative situation is unclear, and it could delay the technology's evolution with laws that are too restrictive for a market that already regulates itself, since user feedback forces companies to impose certain safeguards.